The internet is full of robots, and their numbers keep growing. In 2015, roughly half of all internet traffic came from bots, so it’s important to pay attention to how robots interact with your site. These robots are programs that browse the internet automatically. The most common kind crawls the web and indexes content for search engines, but there are also bad bots, which harvest email addresses or look for sites that are vulnerable to mass hacking attempts. Luckily, you can use a robots.txt file to communicate with all of these non-human netizens, and in some cases even give them instructions on how to use your site.
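To make the crawler side of this concrete, here is a minimal sketch that uses Python's standard-library `urllib.robotparser` to show how a well-behaved bot might read a robots.txt file before requesting pages. The rules, the `BadBot` user agent, and the example.com URLs are hypothetical and exist only for illustration. Keep in mind that compliance is voluntary: the bad bots mentioned above can simply ignore the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, for illustration only:
# every bot is kept out of /admin/ and asked to wait 10 seconds
# between requests, and a bot calling itself "BadBot" is blocked entirely.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Crawl-delay: 10

User-agent: BadBot
Disallow: /
"""


def build_parser(text: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching it over the network."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    checks = [
        ("Googlebot", "https://example.com/blog/"),
        ("Googlebot", "https://example.com/admin/login"),
        ("BadBot", "https://example.com/blog/"),
    ]
    for agent, url in checks:
        # can_fetch() applies the first matching User-agent group,
        # falling back to the "*" group when no specific group matches.
        print(agent, url, rp.can_fetch(agent, url))
    # crawl_delay() returns the Crawl-delay value (in seconds) for an agent.
    print("Crawl-delay for *:", rp.crawl_delay("*"))
```

Running it should report that Googlebot may fetch `/blog/` but not `/admin/login`, and that `BadBot` is refused everywhere. A real crawler would typically call `set_url()` and `read()` to download the live file instead of parsing a string.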

Website Optimization Tutorials

Chow-Bryant’s articles on website optimization: learn how to optimize your website for crawlability and page load times. Use these guides to optimize files on your website such as robots.txt and .htaccess.

Code Snippets on GitHub

If you’re on GitHub, check out our repository of code snippets to help optimize your website.

Posts

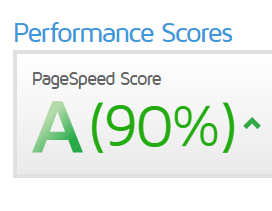

Why Page Speed Matters

As much as I’d like to jump straight into the code snippets that can help speed up your website, it’s important to first define why load times matter. In 2010, Google announced in a blog post that site speed was a ranking factor it considered when choosing which sites to show in search results. Quoting that post:

Speeding up websites is important — not just to site owners, but to all Internet users. Faster sites create happy users and we’ve seen in our internal studies that when a site responds slowly, visitors spend less time there. But faster sites don’t just improve user experience; recent data shows that improving site speed also reduces operating costs.

It’s hard to argue with happy users, better rankings, and reduced operating costs, especially when the improvements may take only a few minutes to make. Now, enough with definitions: let’s get to the reason you’re still reading.